This week I was listening to an episode of one of my fave conspiracy debunking pods, QAA. It’s been running for years now, but in the early days of QAnon it was a really invaluable resource for diving deep into what was going on on the fringes of the American right, something that’s unfortunately now in all of our faces.

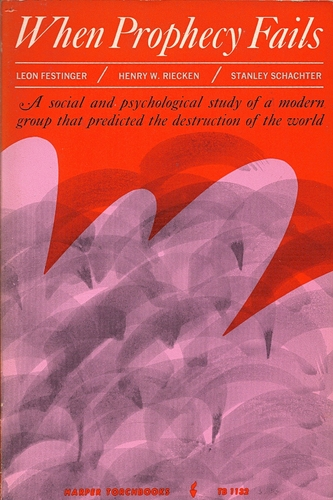

This particular episode was about the famous account of a psych study called “When Prophecy Fails”, published in 1956. I’ve referred to this study several times when I’ve been thinking and writing about online conspiracy thinking in fandom and other spaces. In 1954, a Chicago woman named Dorothy Martin predicted an apocalyptic flood and promised her small circle of followers that flying saucers would arrive to carry them to safety before the waters rose. The aliens didn’t come and the flood didn’t happen. Three psychologists from the University of Minnesota had embedded themselves in the group to watch what happened next, and what they reported was that the believers doubled down. Faced with total disconfirmation, the group didn't dissolve in embarrassment. They started proselytising harder than ever, rationalising the failure as proof that their faith had saved the world.

This account helped launch a really influential concept in social science, which you’ve definitely heard of: cognitive dissonance. The idea that true believers, confronted with evidence that destroys their worldview, will not update — they will dig in deeper. The only problem is that it didn't happen.

A paper published late last year by Thomas Kelly (open source preprint), drawing on newly unsealed archival material, demonstrates that the book's central claims are false and that the authors knew they were false. The cult leader, Martin, publicly recanted. The group disbanded. And it turns out the researchers embedded with the group had manipulated the members' reactions before and after the event. So the foundational case study for "people double down when their beliefs are challenged" was a case where the researchers manufactured the doubling down, and when the subjects updated anyway, they wrote it up as if they hadn't.

This is part of something bigger, called the “replication crisis” in social science, where attempts to repeat significant studies fail to get the same results. In terms of changing our minds, it turns out many of the things we thought were true might not be. The “backfire effect”, which is the idea that if you give someone information that debunks their beliefs it actually strengthens them, has also taken a substantial hit. Brendan Nyhan, one of the original researchers, has since walked back the strong interpretation considerably. The emerging consensus is that corrections mostly work, modestly, when people actually receive them. The problem isn't that minds won't update. It's that corrections rarely reach people in a form that sticks, and the effects decay quickly when they do.

So we can change our minds, which makes it all the more interesting that you can spend thirty seconds on the internet and feel like nobody has ever changed their mind about anything.

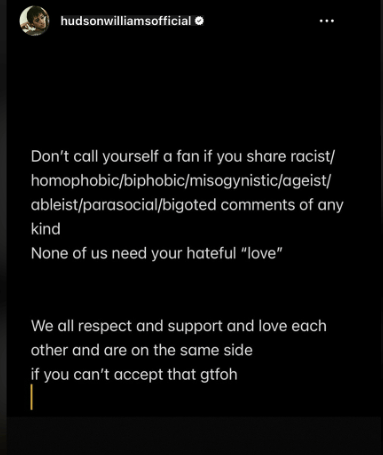

Exhibit A is what happened this month in the Heated Rivalry fandom (what are we, week ten of me talking about this now? shows no signs of me slowing sorry). The short version: fans had been criticising white cast members for not speaking out about racism directed at Hudson Williams online. This was a legitimate complaint. Williams is an Asian-Canadian actor who has been on the receiving end of some genuinely ugly behaviour. Last week, Williams finally responded, posting this on his insta:

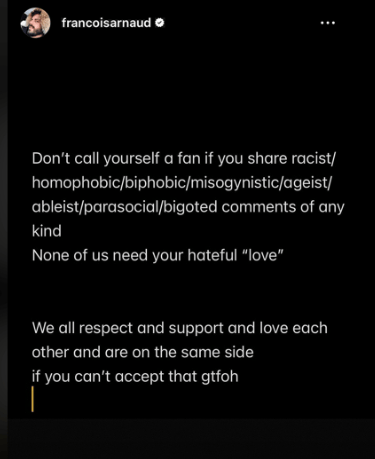

Co-star François Arnaud posted the same:

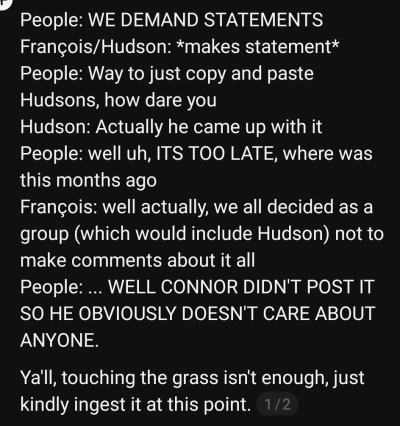

Immediately, the subset of stans who continue to engage in the kind of unhinged behaviour we talked about last month accused Arnaud of just copy/pasting Williams's words. Williams had to come out and confirm that the statement had been Arnaud's idea, and that Williams had agreed to co-post it. Stans then said Arnaud had taken too long to say anything. Arnaud revealed the cast had agreed to a strategy of not engaging before this point. Left with no ground to stand on, they pivoted to criticising a completely different cast member for having done nothing at all.

This is just the most recent example of something that happens a lot online. There is no action Arnaud could have taken that would have been accepted as sufficient. Not posting: proof he doesn't care. Posting: performative, derivative. Williams confirming his sincerity: doesn't count, too slow. The other cast members: next target. The function of the criticism at this point is no longer accountability. It’s something else — the performance of grievance or the social reward that comes from being the person who spotted the problem or the way a community maintains its own sense of moral purity.

The Larries, my (love/hate) fave conspiracists, are my canonical example of a belief system that has become structurally immune to evidence. For over a decade, they’ve maintained that Harry Styles and Louis Tomlinson are secretly in a relationship, despite both men's repeated denials, despite Tomlinson dating women and fathering a child, despite Tomlinson himself saying, with visible exhaustion, "there is nothing I can say, there is nothing I can do to dispel the believers of that conspiracy."

Every piece of counter-evidence gets absorbed and reinterpreted: the girlfriend is a plant, the child is a prop, the denial is itself the proof, because of course they'd have to deny it. This is what an unfalsifiable belief looks like in practice. It’s a closed loop where the theory explains everything, including the evidence against it.

But as I often say, Larries aren't uniquely irrational. They're operating the same cognitive machinery we all use. It's just that somewhere along the way the belief became so load-bearing (so central to their identity, their friendships, their years of accumulated investment) that no exit remained that didn't cost them everything.

This is the puzzle I’ve been turning over this week. The folk psychology said people can't change their minds; the actual evidence says they can and do. So why does the internet feel like a machine for the production of unfalsifiable positions?

I think part of the answer is structural. Changing your mind in public, on social media, risks alienating the people who are your community. You’re guided by the people around you, whose opinion you care about, and you don’t want to step out of line.

This is what makes the AI psychosis cases described in this recent 404 Media piece so interesting by contrast. David watches his friend Michael disappear into thousands of pages of ChatGPT conversations about quantum entanglement and tetrahedral structures, broken code and spiritual physics. The chatbot convinced him he'd discovered a critical flaw in humanity's understanding of physics. David said it felt like talking to a cult member. But what's strange about Michael's situation isn't that he found a community to reinforce his beliefs — he found the opposite. A system that never pushes back and never tires and never has any stake in whether he updates his thinking or not.

I think these represent two different failure modes in our technological infrastructure. One where the social cost of updating is too high. One where there's nothing to push against at all. In both cases, the risk is that the mind stops moving.

The religions that have struggled most in the internet era are the ones whose internal logic depended on information control. Scientology gatekept its own doctrine behind years of paid auditing — so when former members started posting the confidential materials online, the whole structure became legible at once. The CES Letter — an 84-page document a disillusioned Mormon posted online in 2013 — contained no new research. It was a distillation of material that had existed for decades. The internet didn't discover new problems with Mormon history, but it made the existing problems findable, cross-referenceable, and unavoidable. And in both cases, minds changed on a significant scale.

But here's the thing: former members didn't just find counter-evidence. The internet also let them find each other. Community to land in and people who had already made the journey. The information was almost secondary to the social scaffolding that made updating survivable. Which is exactly what's missing when you try to debunk a conspiracy theory in a public thread or correct someone at a family dinner. The facts might be there. But there's nowhere to land. The Kelly paper ends with a note of dry irony: "If Festinger's theory of cognitive dissonance is right, reappraisal of the value of When Prophecy Fails may be slow. If he is wrong, perhaps reappraisal will be swift."

What the evidence actually suggests is that people change their minds when the social conditions allow it and when the cost of updating is low. They do it when there's a community on the other side or when the belief system required secrecy to function and the secrecy is gone. They don't change their minds in public arguments on platforms where being wrong is permanently visible and being right is its own reward. They certainly don't change their minds in conversation with a system that will agree with whatever they say.

Dorothy Martin recanted and her group dissolved. Seventy years later, we built infrastructure that probably would have kept her going indefinitely.

more good stuff

-

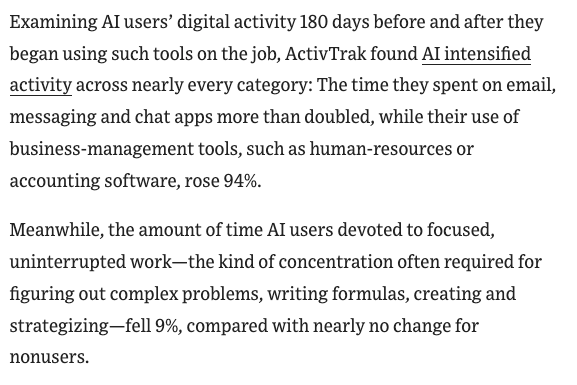

speaking of AI, I was interested in this read from the WSJ on how AIs aren’t reducing workload but making it more intense:

- speaking of the Larries, this week’s The Rest is Entertainment has a good discussion about the scrutiny Harry faces from conspiracists and how that might contribute to what we were talking about last week, saying nothing:

- lots of people have already shared this but it’s worth your time. Rebecca Solnit on how the left’s next hero is already here:

finally, in my lego city

Forward this email to someone with an intransigent belief.

You just read issue #65 of what you love matters. You can also browse the full archives of this newsletter.
